What McKinsey, BCG and Bain Are All Missing About Agentic AI

Dispatches from the Agentic Frontier is a new regular intelligence briefing for leaders in knowledge-intensive sectors. Each dispatch translates evidence from the frontier of agentic AI — practitioner experience, investor signals, strategy research, market events — into what it means for enterprise leaders building for competitive advantage. Some dispatches are field reports from practitioners who are 12–18 months ahead. Others synthesise emerging research. All are filtered through the Intelligence Capital framework developed in The AI Your Competitors Can’t Buy.

Today we look at how the world’s leading strategy firms are advising clients on agentic AI. They have all published serious agentic AI frameworks, which are worth reading carefully. But they share one gap, and it is the gap that will determine which AI investments prove durable.

Introduction

The past six months have produced an unusually coherent wave of strategic thinking on agentic AI from the world's most influential consultancies. McKinsey has published several substantive pieces on agentic AI and AI transformation. BCG has laid out an agentic AI roadmap for CEOs. Bain has declared a "Code Red" enterprise moment. This is not lightweight thought leadership. These are serious frameworks, built on real client experience, pointing in the same direction.

I recommend all of them.

One gap runs through all their materials — and it has direct consequences for how executives and capital allocators should assess the strategic bets they are making right now.

What the Consensus Gets Right

The core argument is consistent and, for the most part, correct. AI is no longer a productivity tool bolted onto existing workflows. It is becoming the operating layer through which work gets done. The firms moving fastest are not experimenting at the margins — they are rewiring entire domains, deploying agents end-to-end, and treating AI transformation as a business redesign challenge rather than a technology programme.

The urgency is real, and the peer evidence backs it up. In banking, JP Morgan is spending $2 billion a year on AI: a rewiring of a 200-year-old institution. Insurance is looking to catch up. Allianz Partners, for example, has deployed end-to-end agentic claims automation across 30 countries and five insurance lines. That is not a pilot; it is a commitment. Chubb, one of the most profitable enterprises in the sector, has publicly pledged to automate 85% of major underwriting and claims processes within three years, with 3,500 engineers deployed.

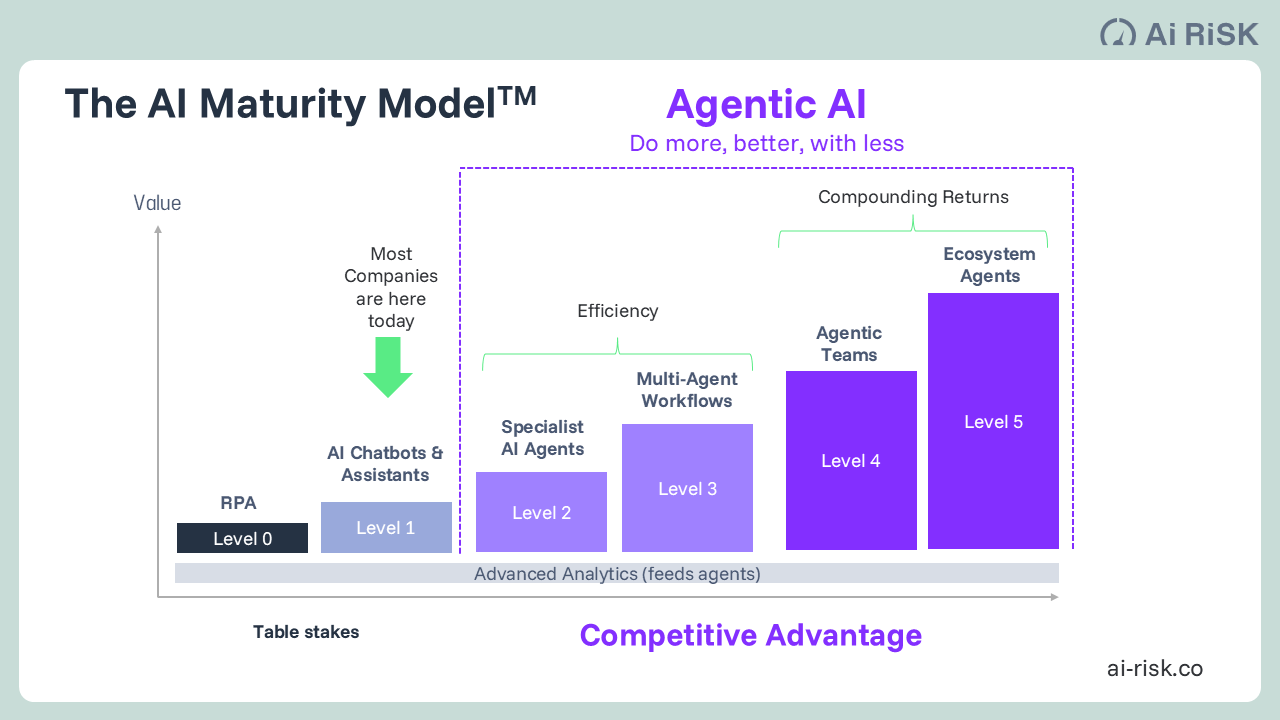

These are not incremental moves. They establish a new baseline for what the efficiency layer — Levels 1 through 3 of AI maturity — looks like at serious scale (see chart below).

The consultancy consensus is right that this layer is not optional. Executing it with urgency generates real bottom-line impact, builds the data substrate that more advanced AI requires, and funds the broader transformation. Any organisation not moving at the pace that JP Morgan, Allianz Partners, Chubb and a host of smaller companies across multiple sectors are demonstrating is accumulating a deficit that will be visible in key metrics within three years.

Where I part company with the consensus is not in its diagnosis of the efficiency layer. It is in what happens next.

Where Every Framework Stops

Each firm gets close to a more fundamental insight — and then stops.

The shared stopping point is this: all three frameworks describe what advantage looks like without identifying the mechanism that makes it permanent rather than merely temporary.

BCG identifies that the new competitive currency in the AI era is proprietary intelligence embedded in the organisation's AI systems. Bain recognises that competitive positioning will be determined by the cumulative duration and velocity of learning, not by scale alone. McKinsey notes that the ability to embed an organisation's expertise and institutional knowledge into agentic AI systems could become core to its intellectual property.

These are not peripheral observations. They are the most important assertions. And in every case, the framework moves on without following them to their logical conclusion.

More detail on the AI Maturity Model, and on where and how Intelligence Capital accumulates, is available here: https://www.ai-risk.co/our-insights-agentic-enterprise/the-ai-your-competitors-cant-buy

Why? Because all their frameworks are built primarily from deployment experience in what I call the efficiency layer: Levels 1 to 3 of AI maturity (see chart above). At that layer, the observations above are true but incomplete. Deploying agents faster and more broadly than competitors does create a proprietary intelligence advantage, for a time. But it is a temporary advantage, because L1–L3 systems, however well deployed, are fundamentally replicable. The platforms that enable them can be purchased. The workflow redesign can be copied. The efficiency gains are real, but they are heading, as McKinsey's own analysis implies, toward an industry-wide baseline.

Their frameworks cannot see beyond this horizon because they have not been built from experience of what lies beyond it.

The Mechanism They Are All Circling

There is a specific architectural distinction that none of the published frameworks yet contains, and it changes the strategic calculus entirely.

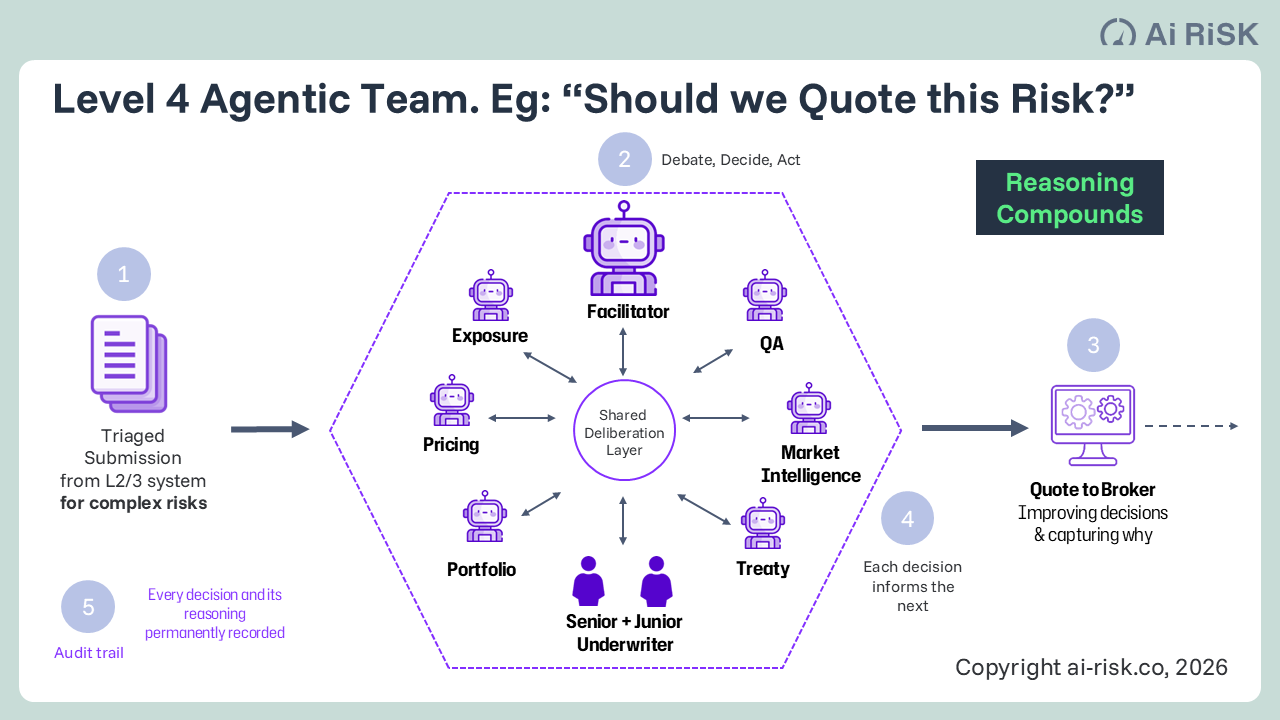

At Level 4 — deliberative multi-agent architecture — the system does not simply automate outcomes. It produces a reasoned record: why a decision was made, what trade-offs were weighed, what information was considered, what was rejected and why. The fiftieth decision is informed by the reasoning embedded in the first forty-nine. Patterns emerge that no individual expert ever held — not because the AI is smarter, but because it remembers everything and connects everything across every cycle.
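To make the idea of a reasoned record more concrete, here is a minimal Python sketch, assuming a simple append-only log. The `DeliberationRecord` and `DeliberationLog` names and their fields are hypothetical illustrations of the elements listed above (why a decision was made, the trade-offs weighed, the information considered, what was rejected and why). This is not AI Risk's architecture or any product's schema. It shows only the bookkeeping: each new decision of a given type can draw on every earlier record of that type, so the fiftieth decision has forty-nine precedents available.

```python
from dataclasses import dataclass, field


@dataclass
class DeliberationRecord:
    """One decision plus the reasoning behind it (hypothetical schema)."""
    case_id: str
    case_type: str
    decision: str
    rationale: str                  # why the decision was made
    trade_offs: list[str]           # what was weighed
    evidence: list[str]             # what information was considered
    rejected: dict[str, str] = field(default_factory=dict)  # option -> why rejected


@dataclass
class DeliberationLog:
    """Append-only store of records, kept as the organisation's own data."""
    records: list[DeliberationRecord] = field(default_factory=list)

    def precedents(self, case_type: str) -> list[DeliberationRecord]:
        # Every earlier record of the same case type is available as context.
        return [r for r in self.records if r.case_type == case_type]

    def record(self, rec: DeliberationRecord) -> int:
        # Count the precedents available at decision time, then append.
        available = len(self.precedents(rec.case_type))
        self.records.append(rec)
        return available


log = DeliberationLog()
for i in range(1, 51):
    seen = log.record(DeliberationRecord(
        case_id=f"case-{i}",
        case_type="complex-underwriting",
        decision="accept with loading",
        rationale=f"illustrative rationale for case {i}",
        trade_offs=["premium adequacy vs. market share"],
        evidence=["loss history", "exposure data"],
        rejected={"decline": "risk within appetite after loading"},
    ))

print(seen)  # 49: the fiftieth decision had forty-nine precedents
```

The design point worth noting is that the log is plain data held by the organisation, separate from whichever model produced the reasoning, which is the property the argument that follows relies on.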

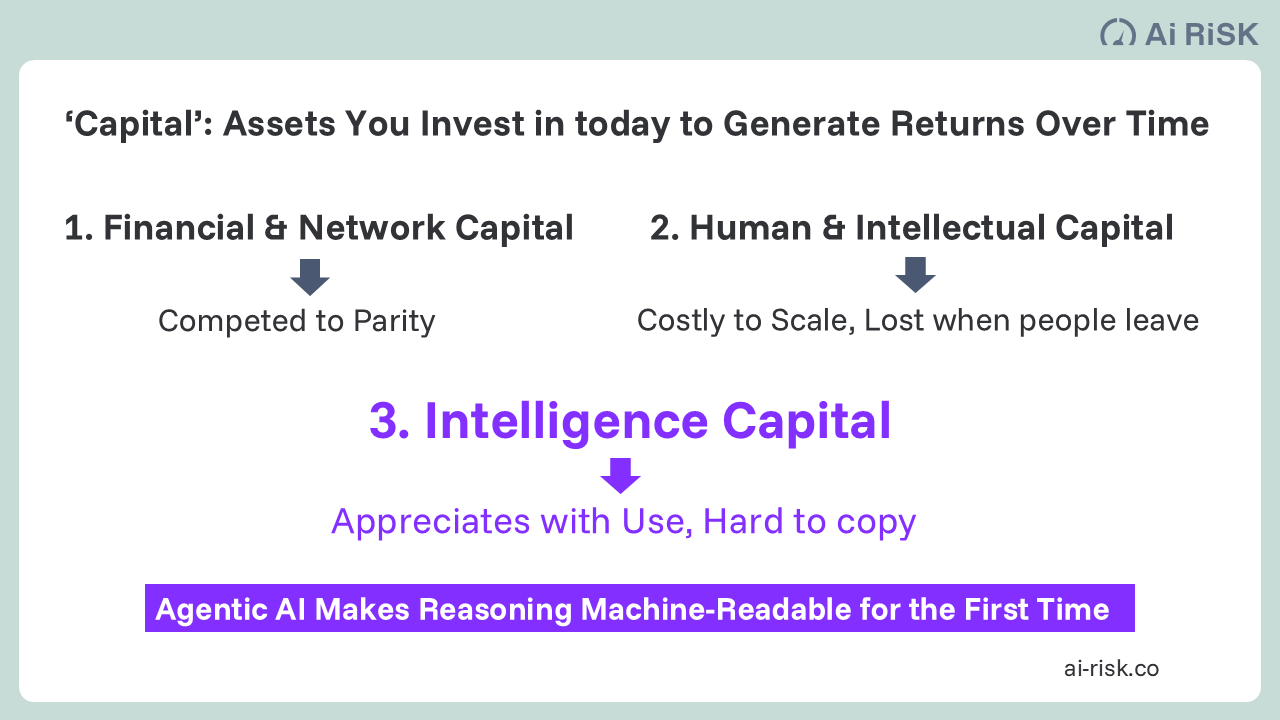

This is Intelligence Capital (‘IC’). And its defining characteristic is that it is time-dependent in a way that no previous technology wave has been.

A more detailed description of Intelligence Capital is available here: https://www.ai-risk.co/our-insights-agentic-enterprise/the-ai-your-competitors-cant-buy

There is a further distinction that matters here, and which none of the published frameworks makes explicit. The statistical learning embedded in Level 2 and Level 3 systems lives in the vendor's model weights — not in your organisation. It is not auditable, not transferable, and it disappears if you change platform.

A newer category of enterprise tool does generate a form of organisation-owned intelligence — encoding expert working patterns as machine-executable instructions that improve with each run. That form is real. But it is replicable: every competitor who buys the same licence accumulates the same category of knowledge simultaneously. It captures how your experts work.

Deliberative IC captures why decisions were made — the reasoning behind each judgement, the trade-offs weighed, the cases from which the pattern was drawn. That record is specific to your operational history, over your elapsed time, on your decisions. No licence purchases it. No competitor reconstructs it retroactively.

The deliberation history that Level 4 generates lives permanently in your organisation, regardless of which technology generated it. This is why even sophisticated L2/L3 automation, however capable it appears, does not build Intelligence Capital. The asset is different in kind, not just in degree.

One example is the type of L4 agentic team that AI Risk has been deploying for clients. It leverages a unique swarm algorithm and an approach to memory management that enable agents to learn rapidly on the job through deliberation with each other and with human workers.

A competitor who deploys the same L4 platform in eighteen months can acquire the enabling technology. They cannot acquire eighteen months of deliberation history. They cannot run last year's decisions retrospectively. They cannot compress the compounding that has already occurred. They start at zero while your system has been accumulating for eighteen months. A competitor who starts later can close the gap in principle — but only by outpacing the early mover's ongoing accumulation, not merely matching their starting investment.
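The catch-up arithmetic can be sketched with a deliberately simple model. Assume, purely for illustration, that each organisation accumulates deliberation records at a constant monthly rate and that the follower starts eighteen months late; the function name and the rates of 100 and 150 records a month are hypothetical, not data. Setting the two cumulative totals equal shows that a follower matching the early mover's pace never closes the gap, while one running 50% faster needs 54 months from the early mover's start (36 months of its own operation) just to draw level.

```python
from typing import Optional


def months_to_close_gap(early_rate: float, follower_rate: float,
                        head_start: float) -> Optional[float]:
    """Months, measured from the early mover's start, until the follower's
    cumulative record count equals the early mover's (toy linear model).

    Returns None if the follower never catches up.
    """
    if follower_rate <= early_rate:
        return None  # matching or trailing pace never closes the gap
    # Solve early_rate * t == follower_rate * (t - head_start) for t.
    return follower_rate * head_start / (follower_rate - early_rate)


print(months_to_close_gap(100, 100, 18))  # None
print(months_to_close_gap(100, 150, 18))  # 54.0
```

The model is linear and ignores compounding. If the early mover's rate itself rises as its history grows, the follower's task only gets harder.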

That is a structurally harder problem than most organisations currently appreciate, and it grows harder with every month of delay.

This is what Bain is pointing at when it identifies ‘cumulative duration’ (the total, accumulated time an organisation has spent developing, training, and deploying AI models and agents) as the new competitive variable. But it applies that observation to deployment velocity (how fast you roll out agents across processes) rather than to the more fundamental question of what those agents are accumulating as they operate over time.

Deployment velocity is an efficiency layer concept. Intelligence Capital is a deliberation layer concept. They are different things, and the distinction is what makes the strategic difference.

The same boundary appears in the most rigorous independent strategic thinking on AI. Professor Roger Martin's Strategy Choice Cascade, applied to AI-augmented enterprise strategy, correctly identifies proprietary data accumulation as the source of durable AI advantage. His John Deere example makes the point precisely: Deere's See & Spray technology works as a strategic differentiator because its AI is trained on datasets that competitors cannot replicate. That is a structurally sound observation.

But proprietary data is a statistical learning concept. It describes what the model was trained on. Deliberation history is something different: it is a record of why decisions were made, what was weighed, what was rejected, and by whose reasoning — accumulated case by case, owned permanently by the organisation, and independent of whichever platform generated it. Even the most careful application of strategy frameworks to AI stops at the data layer. Intelligence Capital begins where the data layer ends.

The L1–L3 efficiency layer automates outcomes. The L4 deliberation layer captures reasoning. The first is necessary. The second is where durable advantage lives.

The Practitioner Gap

This distinction does not emerge from desk research, client surveys, or interviews with organisations running efficiency-layer deployments. It becomes visible only when you have worked inside L4 systems in production and observed what a truly deliberative multi-agent architecture actually produces over extended operation, what the reasoning record looks like after hundreds of decisions on complex cases, and what it means for the organisation that owns that record.

The consultancy frameworks described above are built from experience that is, by and large, L1–L3 in depth. The frontier of what advanced agentic systems are actually capable of — what they accumulate, what compounds, what becomes genuinely irreplicable — is not yet present in any published advisory framework.

This kind of structural lag is normal in any fast-moving technology field. But it has direct consequences for decision-makers relying on the consensus view to set their AI strategy.

Specifically: a framework built from L1–L3 experience will correctly identify the need to move fast, go deep, and rewire entire domains. It will not ask the question that matters most at L4 — which decisions in this organisation are complex enough, repeated enough, and consequential enough to benefit from deliberative reasoning accumulation? And it will not identify time as the irreplaceable strategic variable, which means it will not communicate the cost of delay in terms that are actually accurate.

What This Means for Executives and Capital Allocators

The efficiency layer is non-negotiable. Execute it with the urgency the peer evidence demands. It funds the broader programme, builds the data substrate, and establishes the operational foundation that deliberative AI requires.

But the consultancy consensus, taken as the complete strategic picture, will lead organisations to optimise L1–L3 without ever asking the L4 question. The investment screen of "which assets are advancing up the staircase" finds the right assets for the efficiency layer. It is the wrong screen for Intelligence Capital.

The right screen is different: which organisations are deploying deliberative AI architecture on their highest-value, most complex, most repeated decisions — and how long have they been doing it? That question does not yet appear in any published investment or strategy framework. The organisations asking it are not announcing it, because there is no competitive advantage in announcing it.

For executives, the IC question has three practical components. First, which decisions in your organisation are genuinely deliberative — where reasoning, trade-off, and judgement are the differentiating inputs, not just speed or accuracy? These are the decisions where L4 deliberation accumulates proprietary value over time: complex underwriting, product and service innovation, capital allocation, strategic risk selection. Second, how much of that reasoning is currently captured anywhere — in systems, in records, in institutional memory — and how much leaves the building when experienced people leave? Third, what would it mean for your competitive position if a peer began capturing that reasoning systematically today and you did not?

The Window

The traditional consultancy consensus correctly identifies that the organisations pulling ahead in AI will be those that move with the most urgency and the most depth. That is true, as far as it goes.

The fuller picture is that urgency and depth at the efficiency layer buy you a defensible position at L1–L3, but only for a period. What they do not do, on their own, is build the kind of proprietary advantage that appreciates over time rather than converging toward market parity.

Intelligence Capital is not theoretical. It is in production, in regulated sectors, today (we know, because we are at the forefront of this implementation). The technology is proven. The compounding is measurable. The gap between organisations that are accumulating it and those that are not is already widening — quietly, without announcements, in the decisions that most determine performance.

The window between current production reality and published advisory consensus is real. It will not remain open indefinitely. The winning position is to ask the L4 question before your closest competitors do.

Simon Torrance is founder of AI Risk, an agentic AI advisory and innovation firm. He advises leaders of firms in knowledge-intensive sectors like insurance and financial services on the Intelligence Capital framework and the Agentic AI Accelerator programme. Dispatches from the Agentic Frontier is published periodically on LinkedIn. Previous issues and the full IC framework: ai-risk.co/insights