Morgan Stanley Has 60 Live AI Deployments. But No Durable AI Advantage.

Dispatches from the Agentic Frontier is a new regular intelligence briefing for leaders in knowledge-intensive sectors. Each dispatch translates evidence from the frontier of agentic AI — practitioner experience, investor signals, strategy research, market events — into what it means for enterprise leaders building for competitive advantage. Some dispatches are field reports from practitioners who are 12–18 months ahead. Others synthesise emerging research. All are filtered through the Intelligence Capital framework developed in The AI Your Competitors Can’t Buy.

In this dispatch we take a deep dive into Morgan Stanley’s AI strategy and deployments to draw out lessons for leaders in any sector.

Introduction

Morgan Stanley is the most instructive AI case study available to any leader of a knowledge-intensive business.

It has documented, more publicly and in more detail than almost any comparable organisation, how far excellent execution across four architectural layers of AI deployment can take you. Its former head of AI has also published a framework that pinpoints the one layer that determines whether any of it compounds.

What to do with that layer is the most important question in enterprise AI strategy today. His advice on it deserves a direct answer.

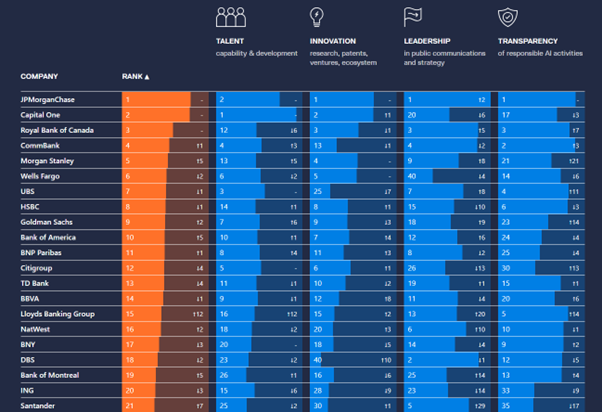

Where the most advanced organisations now stand

Morgan Stanley ranked tenth on the Evident AI Index in 2024 — the first time it had entered the global top ten. In 2025 it jumped five places to fifth. The top ten banks on that benchmark are now improving at 2.3 times the rate of the rest of the industry. That gap is not narrowing. First movers are pulling away.

Source: Evident Insights: https://evidentinsights.com/ai-index/

If you lead a knowledge-intensive business outside banking (in insurance, asset management, professional services, or any sector where expertise and judgment drive value), the Evident index is a useful early-warning system. The most AI-advanced banks are approximately three years ahead of the most AI-advanced insurers. What Morgan Stanley is doing today, your sector will be attempting in 2027.

Will you replicate what it has done, or learn from what it has not yet done?

What genuine operational transformation looks like

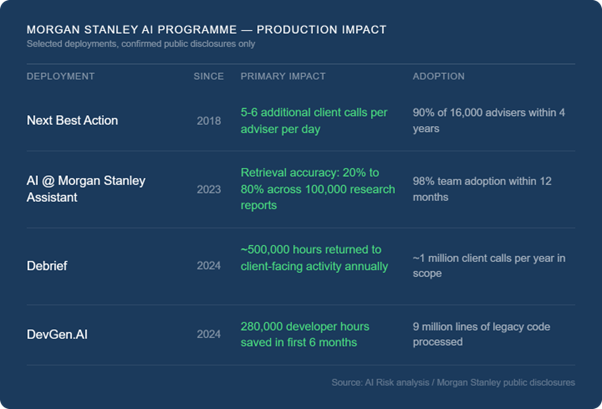

Morgan Stanley's Next Best Action recommendation engine has been running since 2018. Over 90% of its 16,000 financial advisers adopted it within four years. The result: five to six more outbound client calls per adviser per day. In wealth management, contact frequency is the single strongest predictor of asset retention. At that scale, five to six more calls per adviser per day is not a productivity tweak; it is revenue architecture.

The AI @ Morgan Stanley Assistant achieved 98% adoption among adviser teams within a year of full deployment, raising document retrieval accuracy from 20% to 80% across 100,000 proprietary research reports. Debrief, launched in June 2024, saves approximately 30 minutes of administrative work per meeting across a division conducting roughly one million client calls annually — half a million hours per year returned to client-facing activity. DevGen.AI processed nine million lines of legacy code and saved 280,000 developer hours in its first six months in production.
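The half-million-hour figure for Debrief follows directly from the two numbers quoted, assuming each client call counts as one meeting and every meeting receives the full 30-minute saving:

```latex
\[
1{,}000{,}000\ \tfrac{\text{calls}}{\text{year}} \times 0.5\ \tfrac{\text{h}}{\text{call}} = 500{,}000\ \tfrac{\text{h}}{\text{year}}
\]
```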

More than 60 live use cases are now deployed across wealth management, operations, and institutional banking.

This is not a pilot portfolio. It is what industrial-scale operational transformation looks like.

The framework that identifies the right layer

Jeff McMillan, Morgan Stanley's former firmwide head of AI, published a six-layer enterprise AI architecture framework in March 2026. It is the most rigorous public guide to AI infrastructure available to leaders making these decisions today.

His central diagnosis is correct and underappreciated across the industry: most organisations are debating models (his Layer 4) and applications (his Layer 6) while underinvesting in the layers that make both useful at scale, namely data quality, governance, and agentic orchestration. His description of Layer 5, the orchestration layer, as "the operating system for enterprise AI" is the most precise phrase in any public document on the subject. His claim that "Layer 3 and Layer 5 are where the real enterprise value is either unlocked or lost" should be on the wall of every technology leadership team planning an AI programme.

The framework is correct as far as it goes.

But it stops one step too soon.

The recommendation that contradicts the diagnosis

McMillan's advice on Layer 5 is explicit, and his own words repay close reading.

On orchestration infrastructure: "Right now there are no proven players that will work across the seven-layer stack... so what do you do? You work with the hyperscalers that are in place today — the Microsofts of the world, Google or AWS."

On the build versus buy decision: "I would similarly suggest that you buy an agent frame because you're going to get all of these controls. You're going to get a monitoring framework. Sure, you could build that, but I don't think that's what you want to build." And: "I personally — even if I'm a large firm — I'd probably buy it because it's so commoditised."

He frames this as a pragmatic interim position: no startup has yet proven horizontal orchestration at scale, so work with what exists. That is an honest assessment of today's market. But it is not a strategic argument, and the distinction matters, because interim positions have a way of becoming permanent ones.

McMillan understands exactly what is at stake at Layer 5. His own definition: "an agentic layer is a firmwide AI orchestration capability that is able to not only manage work within a vertical, but is able to manage work horizontally across an organisation." He describes it as the place where "the intellectual capital and individual decision-making of people" gets encoded at an organisational level.

He has identified, with precision, the mechanism by which an organisation builds durable advantage from AI. But his pragmatic advice routes that accumulation through infrastructure owned by platforms whose commercial model depends on making the arrangement as permanent as possible.

The interim position is how the dependency begins. Three years in, it is simply the dependency.

When an organisation runs its orchestration layer on infrastructure it does not own, the decision logic, deliberation memory, and domain-specific reasoning flowing through that layer accumulate on someone else's terms, subject to repricing, restructuring, or commercial sunsetting at the platform's discretion. Model capability is an input you use. Cloud infrastructure is an input you use. The orchestration layer is where your organisation's accumulated judgment lives: what it has decided, how it decided it, and what it learned.

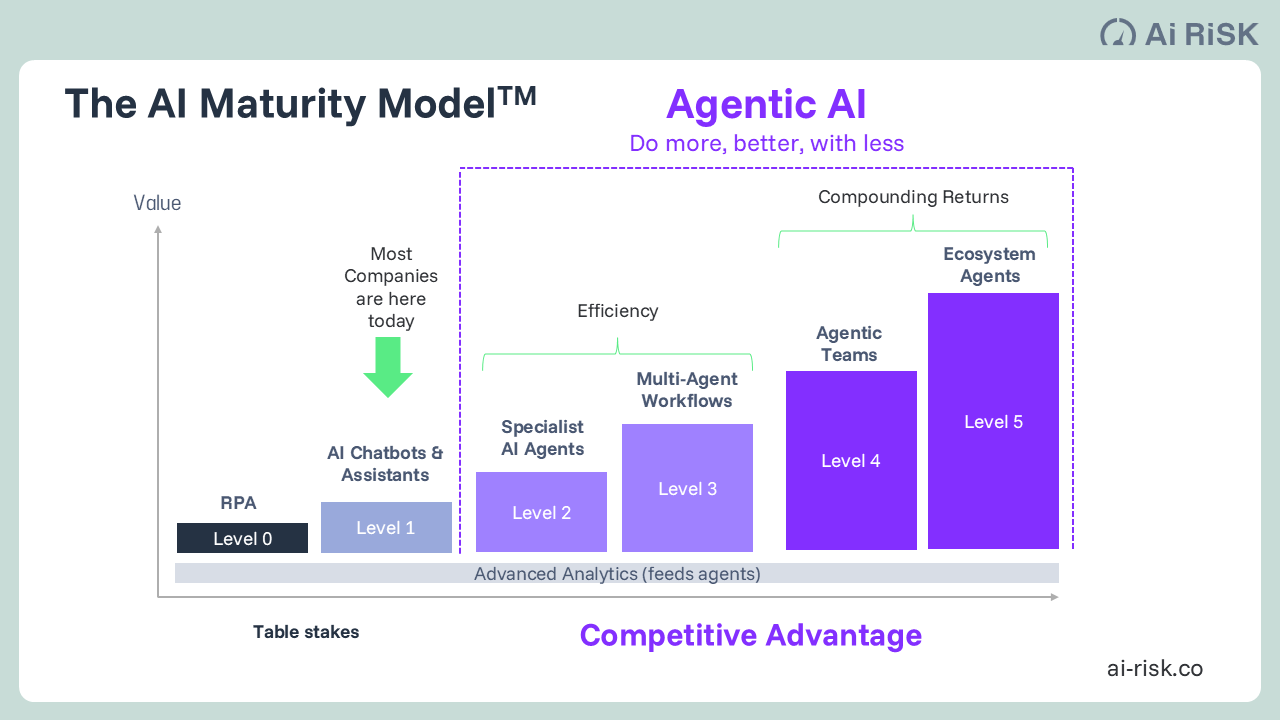

Every investment in automation and workflow tools eventually produces the same result — a capability competitors can replicate within months by purchasing the same platform.

We call this the Parity Problem. The organisations that discover the orchestration dependency are typically eighteen months in, having built impressive demonstrations without the compounding advantage they anticipated. The tools did not fail. The layer where advantage accumulates was never owned.

In our AI Maturity Model (above), Levels 0–3 create ‘Depreciating AI’: value comes from processing speed and cost reduction; competitive advantage erodes as others adopt the same tools; learning is local and siloed; and when the vendor updates the model, learned behaviour may reset. Levels 4 and above generate ‘Appreciating AI’: value comes from the accumulation of institutional intelligence; competitive advantage compounds as first movers widen the gap; learning is cross-functional and persists at the organisational level; and the organisation owns the intelligence, which transfers across platforms. More here: https://www.ai-risk.co/our-insights-agentic-enterprise/the-ai-your-competitors-cant-buy

There is a further distinction worth making explicit. Owning the orchestration layer is the architectural precondition. What generates the highest-value form of Intelligence Capital within it is deliberative capability: AI systems that reason through genuinely complex cases from multiple expert perspectives, capture the reasoning behind their conclusions, and encode expert overrides in structured form.

This is categorically different from workflow automation that logs outcomes. One records what was decided. The other records why, and compounds with every subsequent decision. This is what AI Risk spends its time building for clients.
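To make the distinction concrete, here is a minimal Python sketch of the two kinds of record, written under our own assumptions. Every class, field, and function name (OutcomeLogEntry, DeliberationRecord, Perspective, ExpertOverride, prior_overrides) is hypothetical and purely illustrative; it is not AI Risk's implementation or any vendor's schema. The outcome log keeps only what was decided. The deliberation record also keeps the expert perspectives, the rationale, and any structured override, which later cases can draw on.

```python
# Hypothetical schema for illustration only; not a real product's data model.
from dataclasses import dataclass
from typing import Optional


@dataclass
class OutcomeLogEntry:
    """What workflow automation typically keeps: the decision, not the reasoning."""
    case_id: str
    decision: str


@dataclass
class Perspective:
    """One expert viewpoint applied to a case (e.g. risk, client, legal)."""
    role: str
    assessment: str
    recommendation: str


@dataclass
class ExpertOverride:
    """A human expert's correction, captured in structured form."""
    expert: str
    original_decision: str
    revised_decision: str
    rationale: str


@dataclass
class DeliberationRecord:
    """What a deliberative system keeps: the decision plus why it was reached."""
    case_id: str
    perspectives: list[Perspective]
    decision: str
    rationale: str
    override: Optional[ExpertOverride] = None

    def final_decision(self) -> str:
        # An expert override, if present, supersedes the system's own decision.
        return self.override.revised_decision if self.override else self.decision

    def to_outcome_log(self) -> OutcomeLogEntry:
        # Collapsing to an outcome log keeps the final decision and drops the reasoning.
        return OutcomeLogEntry(self.case_id, self.final_decision())


def prior_overrides(records: list[DeliberationRecord]) -> list[ExpertOverride]:
    """Collect structured overrides from past cases so later deliberations can reuse them."""
    return [r.override for r in records if r.override is not None]


# Illustrative usage with made-up case data.
example = DeliberationRecord(
    case_id="case-001",
    perspectives=[
        Perspective("risk", "Exposure above appetite", "decline"),
        Perspective("client", "Long-standing relationship", "approve with conditions"),
    ],
    decision="decline",
    rationale="Risk view outweighed relationship value",
    override=ExpertOverride(
        expert="senior underwriter",
        original_decision="decline",
        revised_decision="approve with conditions",
        rationale="Collateral offsets the exposure",
    ),
)

print(example.to_outcome_log())
print(prior_overrides([example]))
```

The outcome log for this case shows only the final decision; the override rationale ("Collateral offsets the exposure") survives only in the deliberation record, which is the part that can inform the next similar case.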

The evidence confirms it

Goldman Sachs ranked eleventh on Evident in 2024, below Morgan Stanley. It climbed back to ninth in 2025, still below Morgan Stanley on overall ranking. The most significant architectural difference between the two programmes: Goldman has built a named, proprietary, multi-model platform — the GS AI Platform — routing work across OpenAI, Google Gemini, and Meta's LLaMA with no single-vendor dependency.

Morgan Stanley runs its disclosed AI programme exclusively on OpenAI. Both programmes are impressive. The question they pose is whose accumulated reasoning each firm will own in five years.

The most senior AI leaders at some of the world's most advanced financial institutions have reached the same architectural conclusion independently: the orchestration layer must be owned. These are not small firms feeling their way. They are organisations with the resources and expertise to evaluate every available option. They have chosen not to cede this layer.

The decision facing leaders

Buying inference capability from the best model provider is correct. Buying cloud infrastructure from the most capable enterprise platform is correct. Neither of those is the decision this article is describing.

The specific decision is this: who owns the decision logic, deliberation memory, and domain-specific orchestration that your AI programme generates? Those three elements are the asset. The AI stack your organisation builds over the next two years will either accumulate that asset in a layer you own permanently, or it will accumulate it in a layer you are licensing from a platform that has every incentive to make the dependency irreversible.

Morgan Stanley's programme is genuinely impressive. McMillan's framework is the most useful public architecture guide available. What neither resolves is whether what is being built will compound.

The Intelligence Capital (IC) thesis developed in this series comes from direct experience with Level 4 agentic deployments in production, not from modelling what they might achieve. It grew out of the gap between what those systems generate and what even the most sophisticated Level 3 programme produces, a gap larger than the published research on AI maturity yet acknowledges.

Whether your own AI programme will compound is a question only you can answer.

Simon Torrance is CEO of AI Risk and author of the Intelligence Capital thesis. AI Risk advises knowledge-intensive organisations on agentic AI strategy and implementation.